
On the Internet, you sometimes have to hunt hard to find something. It might be a specific service you need, a business, or a gift for your parents. Whatever it is, something must link to it for you to find it.

That basic logic underpins how the Internet operates: interconnected entities in a global network. To reach a web page, a link leading to it must exist somewhere.

Consider a search engine to be a component of the equation. You can enter one or two keywords and locate a landing page related to the page you’re looking for. You may also receive a direct link from an external source, such as an email from a friend.

So, what happens when you need to get to a page but there are no links to it? What happens to a website with these sorts of pages, and how does this affect SEO? Let’s start with the name of these pages: orphan pages (OPs). Now, let’s take a closer look at what orphan pages are and why they matter for your SEO analysis and content strategy.

What are Orphan Pages?

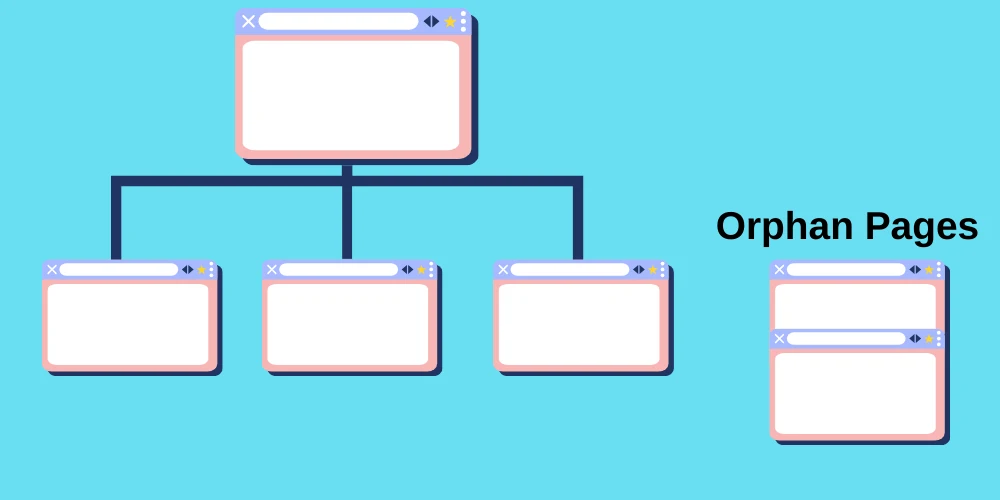

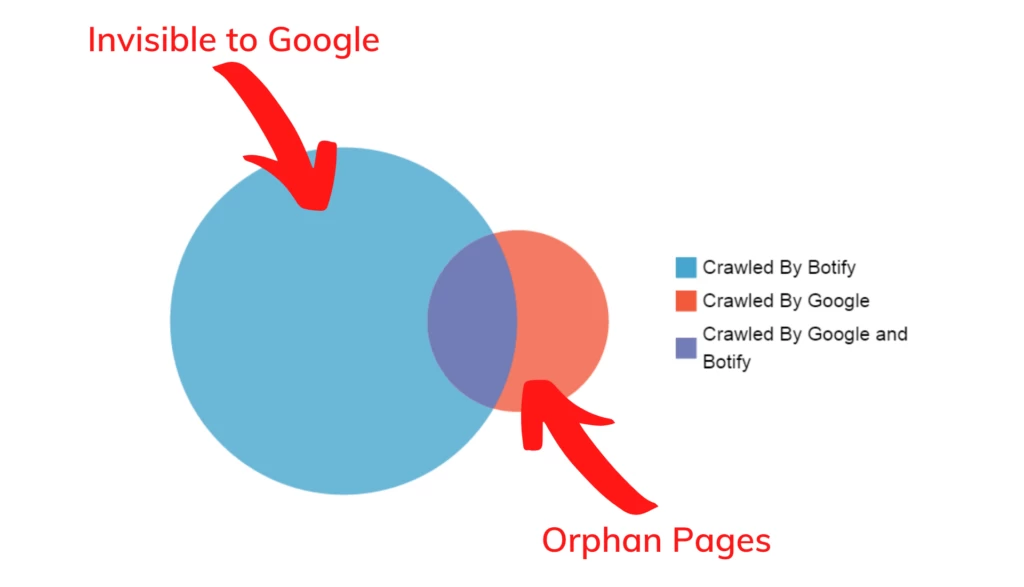

Orphan pages are pages on your website that no other page or section of the site links to. This means that a user cannot view the page unless they know the direct URL. Furthermore, search engine crawlers cannot reach these pages by following links from another page, so they are seldom indexed by search engines. Crawlers can only discover your pages if they are connected to other pages. Think of it as a web for a spider to crawl on. The spider will have difficulty moving from one location to another if sections of the web are broken.
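To make the definition concrete, here is a minimal Python sketch that lists pages with no inbound internal links. The function name `pages_without_inlinks`, the link map, and the example URLs are all hypothetical and chosen only for illustration.

```python
def pages_without_inlinks(links, all_pages):
    """Return pages that no other page links to.

    links: dict mapping a page to the pages it links to.
    all_pages: every page known to exist on the site.
    """
    # Collect every link target, ignoring links a page makes to itself.
    linked_to = {
        dst
        for src, targets in links.items()
        for dst in targets
        if dst != src
    }
    return sorted(set(all_pages) - linked_to)


# Hypothetical site: /old-promo exists, but nothing links to it.
links = {
    "/": ["/blog", "/about"],
    "/blog": ["/blog/post-1", "/"],
}
all_pages = ["/", "/blog", "/about", "/blog/post-1", "/old-promo"]

print(pages_without_inlinks(links, all_pages))  # ['/old-promo']
```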

Most importantly, orphan pages represent lost opportunities to attract and engage consumers, which can negatively impact your bounce rate. Fortunately, the lost page traffic, retention, and income, as well as the damage to your SEO, can be fixed quickly. Here’s how you can find and get rid of orphan pages on your website.

Orphan Pages’ Impact on SEO

Orphan pages are detrimental to SEO, and the reason is not hard to understand. Search engines such as Google treat a page that receives no links from within its own domain as unimportant. That might not seem so bad at first, because most orphan pages are of no relevance to the company either; they were frequently built and then simply forgotten.

Orphan pages also have a detrimental impact on the overall SEO of the website, since their existence can count against the entire site and not just the orphaned page. OPs are generally regarded as bad SEO.

How to Find Orphan Pages Using Screaming Frog

Finding orphan pages is worthwhile because it helps identify sections of a site or important pages that lack internal links. Missing internal links are a problem for users, and for search engines trying to discover and index the pages.

Orphan pages may still be indexed because they were linked to in the past or are referenced from other sources (such as XML Sitemaps or external links). Without any internal links, however, they receive no internal PageRank, which hurts their ranking and organic performance in search engines.

A small number of OPs is normal and rarely a problem. At scale, however, they can lead to index bloat and wasted crawl budget, as well as competing pages or a poor user experience if users find outdated pages through organic search.

This article will show you how to use the Screaming Frog SEO Spider to discover OPs from three different sources: XML Sitemaps, Google Analytics, and Search Console. An SEO Spider license is required to crawl the entire website and to unlock the configuration options for connecting to the three sources.
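The idea behind this approach is a comparison: any URL that one of the three sources knows about, but that the crawl never found through internal links, is an orphan candidate. The Python sketch below illustrates that comparison only. It is not how the SEO Spider works internally, and the function name `orphan_candidates`, the source labels, and the example URLs are assumptions.

```python
def orphan_candidates(linked, sources):
    """Map each URL that is absent from the internal-link crawl
    to the list of sources that reported it.

    linked: URLs found by following internal links.
    sources: dict mapping a source label to the URLs it reported.
    """
    linked = set(linked)
    found = {}
    for name, urls in sources.items():
        for url in urls:
            if url not in linked:
                found.setdefault(url, []).append(name)
    # Sort URLs and source labels so the result is stable.
    return {url: sorted(names) for url, names in sorted(found.items())}


# Hypothetical data for illustration.
linked = {"https://example.com/", "https://example.com/blog"}
sources = {
    "Sitemaps": ["https://example.com/", "https://example.com/old-promo"],
    "GA": ["https://example.com/old-promo", "https://example.com/landing-2019"],
    "GSC": ["https://example.com/blog"],
}

for url, names in orphan_candidates(linked, sources).items():
    print(url, names)
```

Keeping the source labels next to each URL shows where a page is still getting attention, which helps when deciding what to do with it later.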


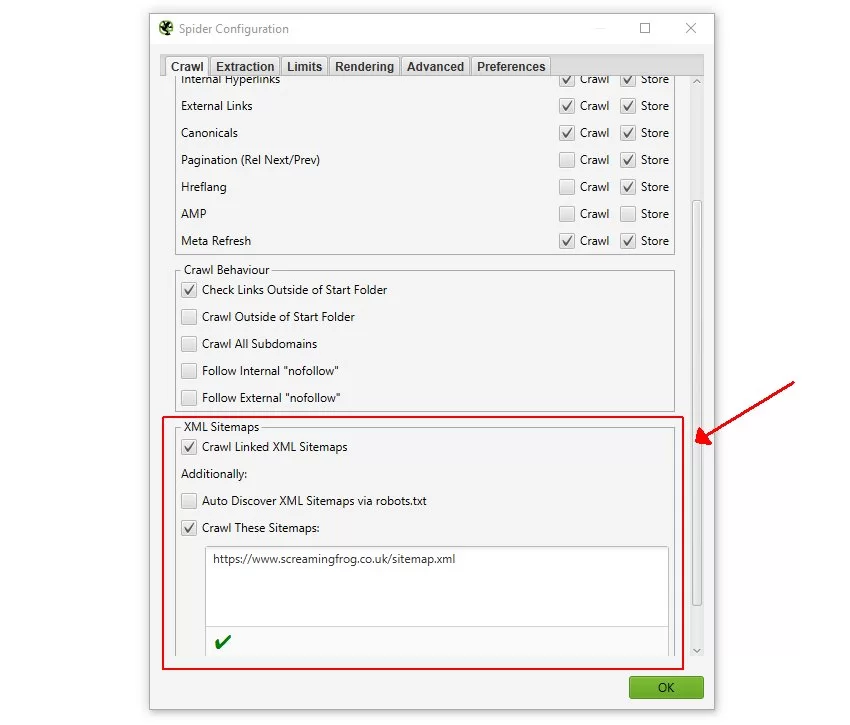

Select Crawl Linked XML Sitemaps

To crawl the URLs in an XML sitemap, you can either let the SEO Spider discover the sitemap through robots.txt or specify the XML sitemap location yourself. This means that any new orphan URLs found only in the XML Sitemap will also be crawled.
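If you want to see what this step amounts to outside the tool, the following Python sketch reads the `<loc>` entries from a sitemap and keeps the ones that were not found through internal links. It assumes a plain `urlset` sitemap (not a sitemap index), and the function names and sample data are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return every <loc> URL listed in a urlset sitemap."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


def sitemap_only_urls(xml_text, linked_urls):
    """Return sitemap URLs that were not found via internal links."""
    return sorted(set(sitemap_urls(xml_text)) - set(linked_urls))


# Hypothetical sitemap content for illustration.
sample_sitemap = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://example.com/</loc></url>"
    "<url><loc>https://example.com/blog</loc></url>"
    "<url><loc>https://example.com/old-promo</loc></url>"
    "</urlset>"
)
linked_urls = {"https://example.com/", "https://example.com/blog"}

print(sitemap_only_urls(sample_sitemap, linked_urls))
# ['https://example.com/old-promo']
```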


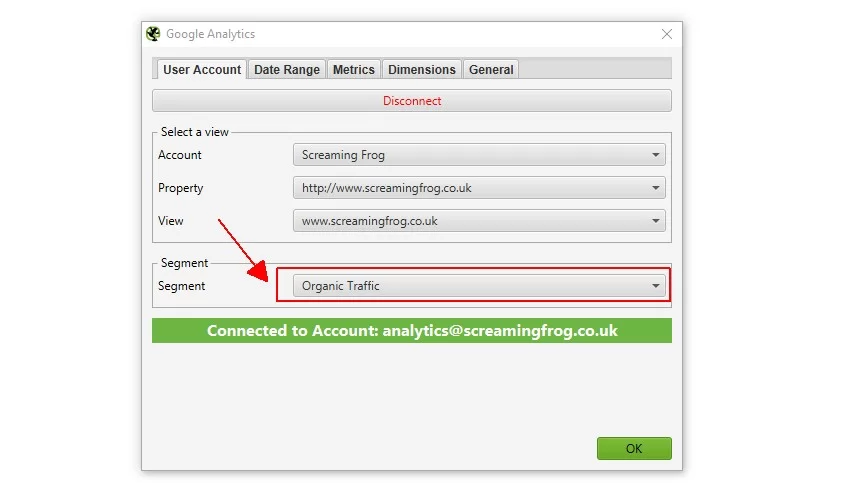

Connect to Google Analytics

During a crawl, you can connect to the Google Analytics API and pull data for a specific account, property, view, or segment. Remember to choose the ‘Organic Traffic’ segment to find orphan pages that receive visits from organic search.

You can choose the date range to be examined, which should be at least a month, and leave the metrics and dimensions at their defaults. If you want to find orphan pages from other traffic sources, you can change the segment to ‘All Users’ or ‘Paid Traffic.’


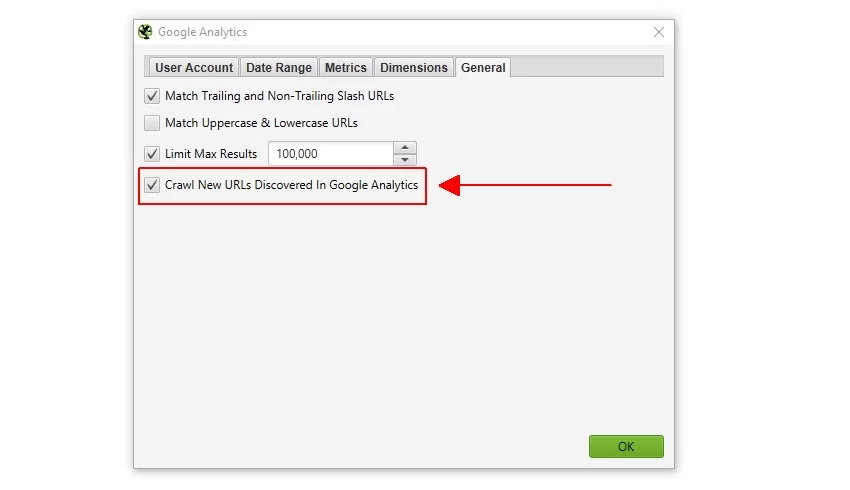

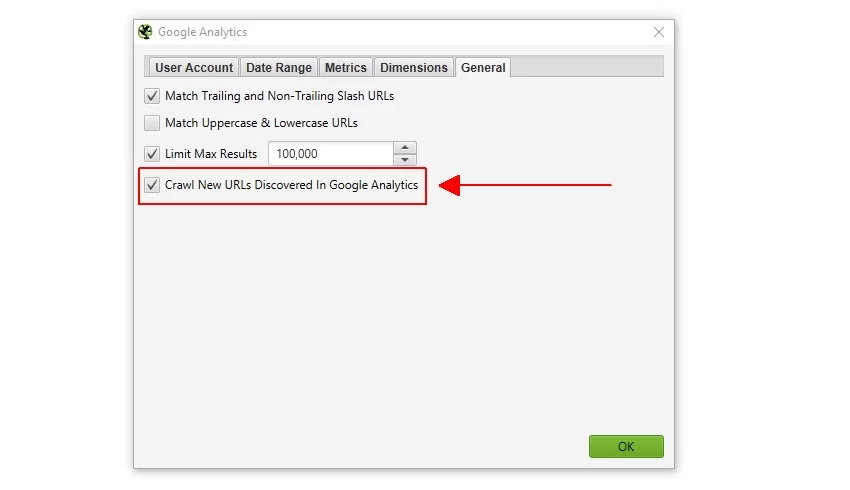

Select Crawl New URLs Discovered in GA

This option can be found in the ‘General’ tab of the Google Analytics configuration window (Configuration > API Access > Google Analytics).

If this option is not selected, new URLs discovered via Google Analytics will only appear in the ‘Orphan Pages’ report. They will not be added to the crawl queue, will not be shown in the user interface, and will not be displayed under the corresponding tabs and filters.


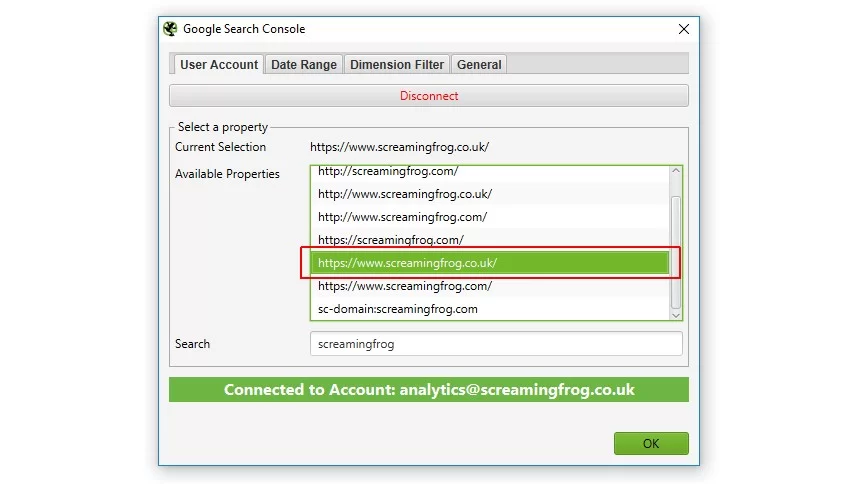

Connect to GSC

During a crawl, you can connect to the Search Analytics API and retrieve metrics such as impressions, clicks, CTR, and position. Simply choose the appropriate property to find orphan pages that are gaining impressions from search but are not linked internally.

As with Google Analytics, you can specify a date range for the data, which should preferably be at least a month.


Select Crawl New URLs Discovered in GSC

This option can be found in the ‘General’ tab of the Google Search Console configuration window (Configuration > API Access > Google Search Console).

As with Google Analytics, if this option is not selected, new URLs discovered via Google Search Console will only appear in the ‘Orphan Pages’ report. They will not be added to the crawl queue, will not be shown in the user interface, and will not be displayed under the corresponding tabs and filters.


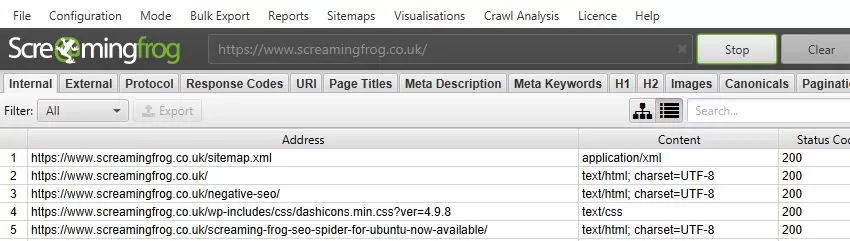

Crawl The Website

Open the SEO Spider, type or paste the URL to crawl into the ‘Enter URL to spider’ box, then click ‘Start.’

The progress bars and the API tab let you track the progress of the crawl and the API requests. The website, as well as any new URLs discovered via the XML Sitemap, Google Analytics, or Search Console, will be crawled. Wait until the crawl completes and reaches 100%.


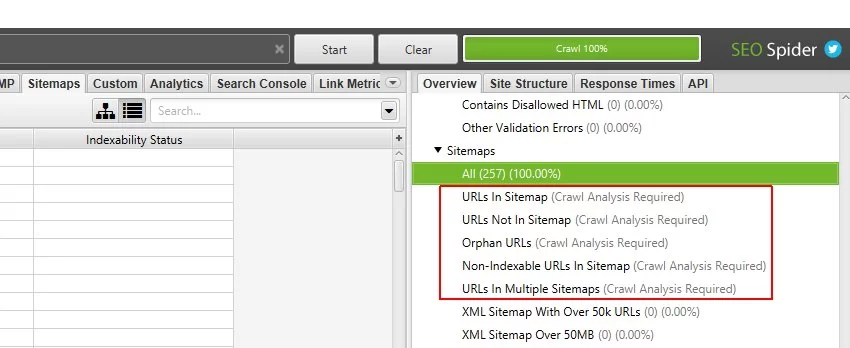

Click Start in the Crawl Analysis Section

During a crawl, most of the filters in the SEO Spider are populated in real time. However, the ‘Orphan URLs’ filters under the ‘Sitemaps,’ ‘Analytics,’ and ‘Search Console’ tabs can only be viewed once the crawl has ended.

They are only populated with data by the post-crawl ‘Crawl Analysis.’ The right-hand ‘Overview’ pane shows a ‘(Crawl Analysis Required)’ label against filters that need data from post-crawl analysis. There are, for example, five filters under ‘Sitemaps’ that require it.

The SEO Spider can only know which URLs are missing from an XML Sitemap, and vice versa, once the entire crawl has finished. After that, you only need to click a button to populate these three orphan URL filters.

However, if you have already configured ‘Crawl Analysis,’ you may want to double-check that ‘Sitemaps,’ ‘Analytics,’ and ‘Search Console’ are selected in ‘Crawl Analysis > Configure.’ To speed up this phase, uncheck any other items that require post-crawl analysis.

When the crawl analysis is finished, the ‘Analysis’ progress bar will be at 100% and the filters will no longer display the ‘(Crawl Analysis Required)’ label. They will also be populated with orphan URL data.


Analyse ‘Orphan URLs’ Filters

You can now review the orphan pages that were found by opening each tab and selecting its ‘Orphan URLs’ filter. On the Screaming Frog website, for example, some orphan URLs discovered via the XML sitemap return errors or redirect.

While they aren’t real pages, they are orphan URLs that aren’t linked from anywhere on the website. In this case, the old URLs should have been removed from the XML sitemap.

Orphan pages can still have internal links from other orphan pages. According to the Search Console data, there are also pages on the website that respond with a 200 status code but are not linked internally.

The ‘Analytics’ tab and its ‘Orphan URLs’ filter can be reviewed in the same way. The data from each of these tabs and filters can be exported using the ‘Export’ button in the interface.
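Because orphan pages can link to each other, having an inbound link is not enough to prove that a page is connected to the site. What matters is whether the page can be reached from the home page. The Python sketch below shows that check with a breadth-first search. The function name `unreachable_pages` and the example link map are hypothetical, and this is an illustration rather than the SEO Spider’s own method.

```python
from collections import deque


def unreachable_pages(links, start, all_pages):
    """Return pages that cannot be reached from `start`
    by following internal links."""
    seen = {start}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return sorted(set(all_pages) - seen)


# Hypothetical site: /old-promo and /old-promo/terms link to each
# other, but nothing reachable from the home page links to them.
links = {
    "/": ["/blog"],
    "/blog": ["/"],
    "/old-promo": ["/old-promo/terms", "/"],
    "/old-promo/terms": ["/old-promo"],
}
all_pages = ["/", "/blog", "/old-promo", "/old-promo/terms"]

print(unreachable_pages(links, "/", all_pages))
# ['/old-promo', '/old-promo/terms']
```

Both pages have an inbound link, yet a visitor who starts at the home page can never reach either of them.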


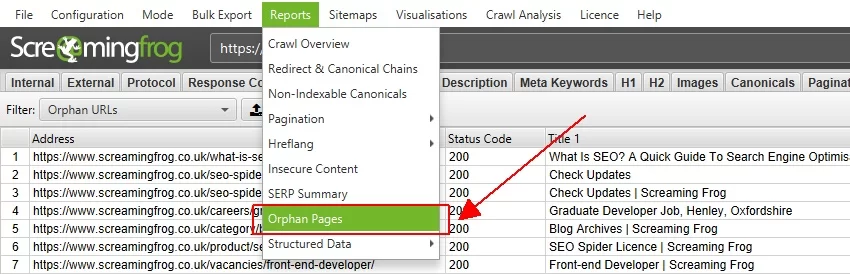

Export Orphan URLs File

Finally, if you want to export a list of all orphan pages detected, use the ‘Orphan Pages’ report.

A ‘Source’ column next to each orphan URL shows where it was discovered. The sources are abbreviated to ‘GA’ for Google Analytics, ‘GSC’ for Google Search Console, and ‘Sitemaps’ for XML Sitemaps.

If you connected Google Analytics and Search Console in a crawl but did not tick the ‘Crawl New URLs Discovered In GA/GSC’ box, this report will still contain data for those URLs. They simply will not have been crawled and will not be displayed under the relevant tabs and filters.
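Once you have the export, grouping the URLs by their source makes the list easier to work through. The Python sketch below assumes the report was saved as CSV with a `URL` column and a `Source` column. Only the ‘Source’ column and its values are described above, so the `URL` header, the function name, and the sample rows are assumptions you should adjust to match your actual file.

```python
import csv
import io
from collections import defaultdict


def group_by_source(csv_text, url_col="URL", source_col="Source"):
    """Group orphan URLs from a CSV export by their discovery source."""
    groups = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        groups[row[source_col]].append(row[url_col])
    return dict(groups)


# Hypothetical export content; the column headers are assumed.
sample_export = """URL,Source
https://example.com/old-promo,Sitemaps
https://example.com/landing-2019,GA
https://example.com/hidden-guide,GSC
https://example.com/spring-sale,GA
"""

for source, urls in group_by_source(sample_export).items():
    print(source, len(urls), urls)
```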

How Do Orphan Pages Occur?

Orphan pages are frequently created when a company no longer wants particular pages to appear on its website but still wants to keep them. This may be an old blog category that you don’t want to be client-facing, for example.

When you disable this category, the blog articles are no longer reachable through the website. They do, however, continue to exist under their own URLs. The issue now is that nothing on the website links to them anymore, which turns them into orphan pages.

Common Reasons Orphan Pages Exist

Orphan pages are commonly created for the following reasons:

- Your website’s architecture has major flaws.

- Your goods are out of stock or have been discontinued, but their product pages remain online.

- Pages were removed from the internal linking, either all at once or step by step, but were never taken offline.

- ‘Test pages,’ for example, were created to run A/B tests. When the person in charge leaves the organization, no one remembers these URLs.

- URLs from a prior CRM system were never fully removed.

- Landing pages for popular/seasonal themes were never disabled.

- Incorrect usage of the CMS resulted in the creation of pages.

- Categories were taken offline, but the pages inside them were not carried over.

- During a site migration, pages were simply ‘forgotten.’

- And a slew of additional reasons.

How to Avoid Orphan Pages?

Naturally, you don’t want to delete old posts if you want to keep the SEO trust they have built up. At the same time, the old content still has to be reachable by visitors. Rather than letting these posts turn into orphan pages, it is better to link to them from elsewhere on the website. Please keep in mind that a single link on a single page is not enough, since Google will still smell a rat.

The easiest way to avoid orphan pages is to make sure every page on your website receives internal links. At the same time, make sure each link is relevant. For example, if a page discusses on-page SEO, it should only link to SEO-related pages.

Make it a habit to check your site for OPs regularly so that none slip through. Replace any internal links that point to pages that no longer exist with links to live pages.
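A regular check like this can be partly automated. As a minimal sketch, assuming you already have a map of internal links and the status code of each target from a crawl, the following Python function lists links that point to pages that no longer respond with 200. The function name `broken_internal_links` and the sample data are hypothetical.

```python
def broken_internal_links(links, status):
    """Return (source, target) pairs whose target does not
    respond with a 200 status code.

    links: dict mapping a page to the pages it links to.
    status: dict mapping a page to its HTTP status code;
            pages missing from this dict count as broken.
    """
    return sorted(
        (src, dst)
        for src, targets in links.items()
        for dst in targets
        if status.get(dst) != 200
    )


# Hypothetical crawl data for illustration.
links = {
    "/": ["/blog", "/summer-sale"],
    "/blog": ["/", "/summer-sale"],
}
status = {"/": 200, "/blog": 200, "/summer-sale": 404}

for src, dst in broken_internal_links(links, status):
    print(f"{src} links to missing page {dst}")
```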

Why are Orphan Pages Bad for SEO?

When low-quality pages consume a substantial portion of your crawl budget, you end up spending more resources just to get Google to reach the pages that matter. Furthermore, if Google cannot find natural links between the pages on your website, its crawl rate slows. As a result, even fantastic content will not help your rankings if Google cannot get to it.

Unwanted OPs have an impact on SEO, and so do orphans that were meant to be useful. OPs with no inbound links harm the whole site. Link health is a foundation of SEO and one of the many factors used to rank websites. A ranking system would be pointless if search engines did not weigh the good and the bad of each website on the Internet, because site relevance would lose its meaning. An orphan page that is useful to people is a lost chance to draw visitors to your site.

Google dislikes it when pages on a website have no connection to one another. This is because, in the past, people tried to conceal pages from Google in this manner. This method is frequently employed by so-called Black Hat SEOs, individuals who use tricks that violate Google’s guidelines to improve keyword rankings.

Google assumes that the site owner does not want users to find certain pages on the website, even though those pages continue to exist for some reason. This approach used to be known as Ghost Pages, a Black Hat technique of hiding pages within a website so that only search engines can see them while they stay invisible to users. In conclusion, OPs must be avoided at all costs.

How Do I Fix Orphan Pages?

So you’ve found the OPs that need to be fixed. But before you fix them, think about why these pages became orphans in the first place, so the problem doesn’t recur. For example, did your content team forget that the page still exists instead of creating a redirect for it? Taking the time now to define and apply redirect and internal linking rules will help you in the future by reducing the chance of new orphan pages.

Orphan pages are classified into two types:

- Expected orphan pages, which you typically do not need to be concerned about

- Unexpected orphan pages, which you should be worried about

The path you follow to fix your OPs will be determined by their type.

Repairing an orphan page may be less difficult than you think. The fix you choose should be based on the function of each orphaned page and how it might help you achieve your marketing and conversion objectives. Here are some typical options:

- Link to your OPs from other pages on your website, especially if your target audience needs to see the OPs while browsing your website.

- If you no longer need the orphaned pages, archive them.

- Let an SEO agency take care of your orphan pages.